All IT professionals have to deal with firewalls in some capacity or another. Protecting communication with servers is a fundamental component of any security plan. Whenever we install SQL Server, we need to do two things before we can talk to the instance: enable a network protocol (usually TCP) and create a firewall rule allowing inbound traffic. Performing these steps is so standard that it only makes sense to automate them.

Enabling TCP communication is usually handled within the SQL Server setup. It is one of the command line options, which means we can include it in a config.ini and consistently enable it as part of our setup. Firewall rules are an OS operation, though, and are not part of the SQL Server setup. There have been command line options for a long while courtesy of netsh, but since PowerShell 3 we’ve also had New-NetFirewallRule to execute this task:

New-NetFirewallRule -DisplayName "Allow SQL Server" -Direction Inbound -LocalPort 1433 -Protocol TCP -Action Allow
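Since the goal is automation, it helps if the rule creation can be re-run without creating duplicates. The following is a minimal sketch, assuming the NetSecurity module is available and the session is elevated. The function name `Add-SqlPortRule` and its defaults are made up for illustration and are not part of any module:

```powershell
# Hypothetical helper: create the inbound rule only if no rule with the same
# display name exists yet, so the script can be run repeatedly.
function Add-SqlPortRule {
    param(
        [string]$DisplayName = 'Allow SQL Server',
        [int]$Port = 1433
    )

    # Get-NetFirewallRule raises an error when nothing matches; suppress it
    # and treat an empty result as "rule not present yet".
    $existing = Get-NetFirewallRule -DisplayName $DisplayName -ErrorAction SilentlyContinue

    if (-not $existing) {
        New-NetFirewallRule -DisplayName $DisplayName -Direction Inbound `
            -LocalPort $Port -Protocol TCP -Action Allow
    }
}

# Usage (elevated session on the SQL Server host):
# Add-SqlPortRule -Port 1433
```

Checking by display name is a simplification: Windows allows several rules with the same display name, so a stricter script might check the port filter as well.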

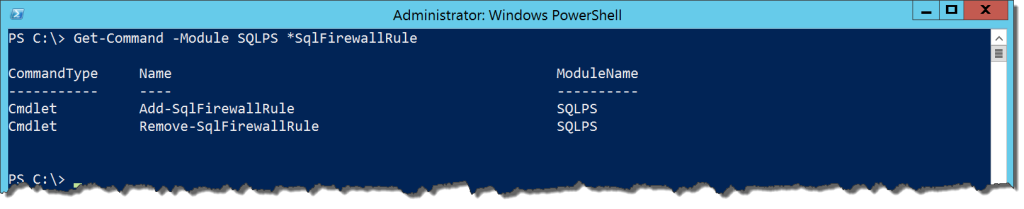

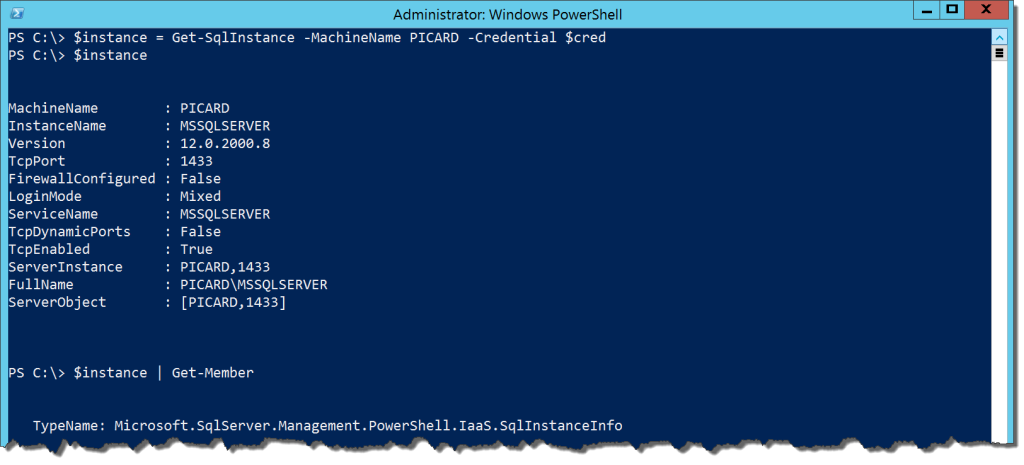

Respectively, the Add-SqlFirewallRule and Remove-SqlFirewallRule cmdlets will create and remove a firewall rule for SQL Server. The help files show only a handful of parameters to use. They also state a dependency on our friend, the Cloud Adapter Service. So let’s see how it works.

The command is easy enough to run:

Add-SqlFirewallRule -ServerInstance PICARD -Credential $cred

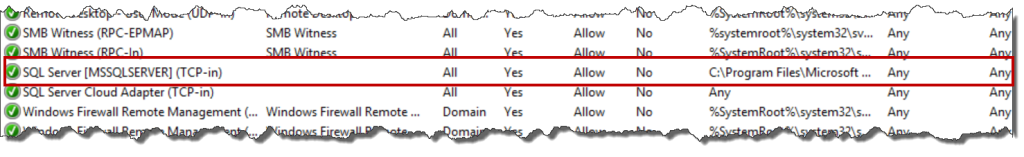

All we need to make this happen is an instance name and a credential that has enough rights on the host machine to create a firewall rule. Instead of the instance name, we could also pass an SMO Server object or a provider path to the instance. With any of these options, what we get is a firewall rule for the SQL Server application of that named instance.

This is actually an extremely convenient way to allow firewall access for our instance. Because it allows access specifically for the application executable, it helps minimize port management. However, that’s about all the control you get. The rule will always be for all your profiles, meaning you are opening access to anyone who can reach the machine. This kind of blanket security change could easily expose your data in a way you were not planning on.
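For comparison, the native cmdlet can also create a program-based rule while keeping control over profile and source addresses. This is only a sketch under stated assumptions: the helper name `New-ScopedSqlRule`, the display name, the executable path, and the subnet are all illustrative and need to be adjusted for a real environment.

```powershell
# Hypothetical helper: allow inbound traffic to the SQL Server executable,
# but only on the chosen profile and from the chosen remote addresses.
function New-ScopedSqlRule {
    param(
        # Path to sqlservr.exe; it varies by SQL Server version and instance name.
        [Parameter(Mandatory)][string]$ProgramPath,
        [string]$RemoteAddress = '10.0.0.0/24',   # assumed application subnet
        [string]$FirewallProfile = 'Domain'       # instead of every profile
    )

    # Collect the arguments in a hashtable and splat them onto the cmdlet.
    $ruleParams = @{
        DisplayName   = 'SQL Server (PICARD) Domain only'
        Direction     = 'Inbound'
        Action        = 'Allow'
        Program       = $ProgramPath
        Profile       = $FirewallProfile
        RemoteAddress = $RemoteAddress
    }

    New-NetFirewallRule @ruleParams
}

# Usage (elevated session; the path is an example, not a guaranteed location):
# New-ScopedSqlRule -ProgramPath 'C:\Program Files\Microsoft SQL Server\MSSQL12.MSSQLSERVER\MSSQL\Binn\sqlservr.exe'
```

This keeps the convenience of an application-based rule while limiting who can reach the instance.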

Removing a firewall rule does not work quite as expected. Running Remove-SqlFirewallRule will not remove your firewall rule, only disable it. This is not much of a hassle, but it is good to be aware of. If you add the rule back, it simply gets re-enabled, so there are no naming conflicts.

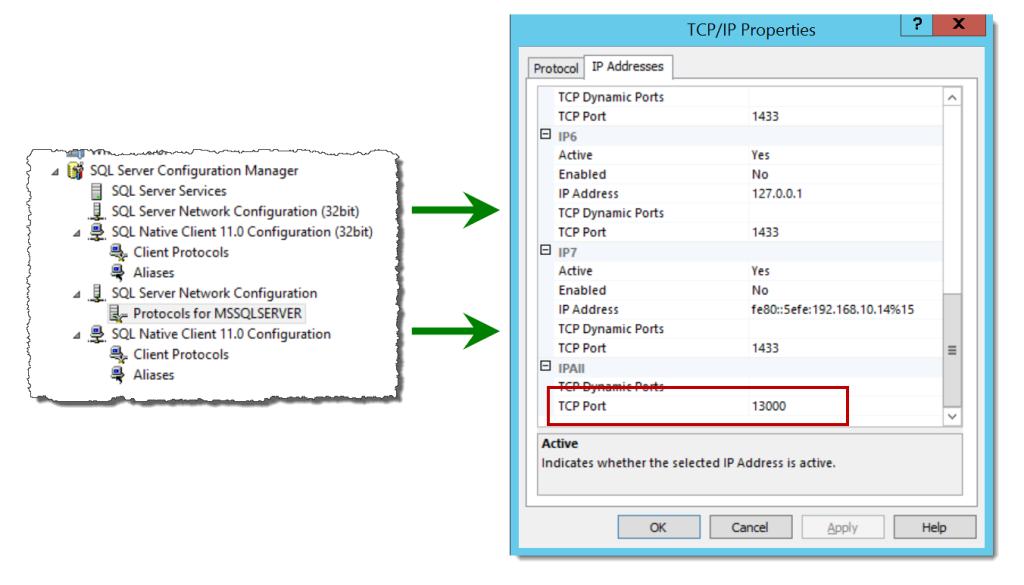

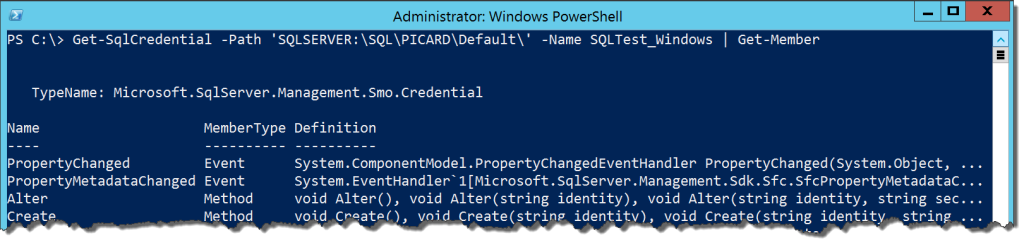
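One way to check the state of the rule from PowerShell is to look up rules by the program they are bound to. The sketch below assumes the rule targets an executable named sqlservr.exe. The helper name `Get-SqlProgramRule` is hypothetical, and the wildcard will match rules for every SQL Server instance on the machine:

```powershell
# Hypothetical helper: list firewall rules bound to a SQL Server executable
# and show whether each one is currently enabled.
function Get-SqlProgramRule {
    Get-NetFirewallApplicationFilter -Program '*sqlservr.exe' |
        Get-NetFirewallRule |
        Select-Object DisplayName, Enabled, Profile, Direction
}

# Usage:
# Get-SqlProgramRule
#
# To delete a disabled rule for good (elevated session), pipe the matching
# rule to Remove-NetFirewallRule. Review the list first, because the wildcard
# can match more than one rule:
# Get-NetFirewallApplicationFilter -Program '*sqlservr.exe' |
#     Get-NetFirewallRule | Remove-NetFirewallRule
```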

There’s one other cmdlet to look at relating to firewalls, and that’s Set-SqlNetworkConfiguration. It is used to change the TCP port that the instance is listening on. The parameters are similar to any other cmdlet using the Cloud Adapter Service, where you will need the instance name and a credential with permissions to alter the service:

Set-SqlNetworkConfiguration -ServerInstance PICARD -Protocol TCP -Port 13000 -ForceServiceRestart -Credential $cred

It’s a handy way to change your TCP port. However, doing this has no impact on the firewall rule created by Add-SqlFirewallRule, as that firewall rule maps to the application executable for the instance.
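A port-based rule, such as the one created earlier with New-NetFirewallRule, is a different story: it keeps pointing at the old port until it is updated. A minimal sketch, assuming a rule with the display name "Allow SQL Server" exists and the session is elevated. The helper name `Set-SqlPortRule` is made up for illustration:

```powershell
# Hypothetical helper: point an existing port-based rule at a new local port.
function Set-SqlPortRule {
    param(
        [string]$DisplayName = 'Allow SQL Server',
        [Parameter(Mandatory)][int]$Port
    )

    # Set-NetFirewallRule modifies the rule in place, so the display name
    # and all other settings stay the same.
    Set-NetFirewallRule -DisplayName $DisplayName -LocalPort $Port
}

# Usage, matching the port used with Set-SqlNetworkConfiguration above:
# Set-SqlPortRule -Port 13000
```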

Overall, these cmdlets can be useful, but I am not sure I would recommend them. The lack of fine-grained control over how the rule gets created is a big concern for me. Add to this the dependency on the Cloud Adapter Service, which opens up another vector for accessing the server. We’re trading control for ease, and the benefits do not outweigh the drawbacks. Since we already have a full set of Network Security cmdlets that allow us to manage firewall rules, I would not use the SQLPS module cmdlets.