Backups and restores are key tasks for any database administrator. Our primary job is safeguarding the data, and backups provide us with a redundant, point-in-time state of our data that we can recover from in the event of a Bad Thing(tm). Not taking backups is a great way to make sure your resume is up to date.

It’s no surprise that the SQLPS module has two cmdlets specifically designed for these functions: Backup-SqlDatabase and Restore-SqlDatabase. Go ahead and take a moment to look at the help files for these using Get-Help. The first thing you should see is that there’s quite a bit of documentation for these two cmdlets. They have some of the most thorough help files of any of the SQLPS cmdlets.

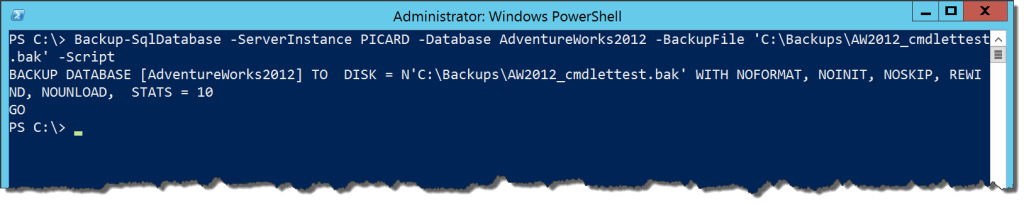

Backing up a database with Backup-SqlDatabase is easy, much like it would be if we were to use BACKUP DATABASE in T-SQL:

Backup-SqlDatabase -ServerInstance PICARD -Database AdventureWorks2012 -BackupFile 'C:\Backups\AW2012_cmdlettest.bak'

If you run this, you’ll note that you get a nice little progress bar for the backup action. Not too much else to worry about here, because it’s straightforward in how it operates. This cmdlet does run T-SQL behind the scenes, meaning we can use the -Script argument to generate the T-SQL statement the cmdlet will run when it backs up our database:
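A minimal sketch of that call, reusing the backup command from earlier and adding only -Script. The splatted hashtable and the Get-Command guard are illustrative choices rather than anything the cmdlet requires, and the instance name PICARD is simply carried over from the example above.

```powershell
# Same parameters as the earlier backup, plus -Script so the cmdlet
# outputs the T-SQL statement rather than performing the backup.
$backupParams = @{
    ServerInstance = 'PICARD'                            # instance name from the example above
    Database       = 'AdventureWorks2012'
    BackupFile     = 'C:\Backups\AW2012_cmdlettest.bak'
    Script         = $true                               # equivalent to passing -Script
}

# Guard (an illustrative addition): only call the cmdlet where it is available.
if (Get-Command -Name Backup-SqlDatabase -ErrorAction SilentlyContinue) {
    Backup-SqlDatabase @backupParams
}
```

Splatting keeps the parameter list readable, and removing the Script entry turns the same hashtable back into a real backup call.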

Looks suspiciously like what you would get if you scripted out a backup action in SQL Server Management Studio, doesn’t it? We’re going back to the concept that the SQLPS components all use the SMO, meaning they work the same way as other tools that use the components, like SSMS. This becomes handy if you want to use the cmdlets to shortcut creating scripts for your backups.

Most of the other arguments for this cmdlet are simply additional options for the T-SQL BACKUP command. This means we can have the same level of customization of our backups as we would if we were writing straight T-SQL. I’ll let you explore those on your own and consider which options make sense and how to use them.
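As one hedged illustration of that mapping, the sketch below asks for a compressed, copy-only backup with checksums. The backup file name is hypothetical, the example assumes an edition of SQL Server that supports backup compression, and -Script is included only so the resulting WITH options can be inspected before anything runs.

```powershell
# Each entry corresponds to an option on the T-SQL BACKUP DATABASE command.
$copyOnlyParams = @{
    ServerInstance    = 'PICARD'
    Database          = 'AdventureWorks2012'
    BackupFile        = 'C:\Backups\AW2012_copyonly.bak'   # hypothetical file name
    CopyOnly          = $true    # COPY_ONLY: does not disturb the normal backup chain
    CompressionOption = 'On'     # COMPRESSION
    Checksum          = $true    # CHECKSUM
    Script            = $true    # emit the T-SQL instead of running it
}

# Guard (an illustrative addition): only call the cmdlet where it is available.
if (Get-Command -Name Backup-SqlDatabase -ErrorAction SilentlyContinue) {
    Backup-SqlDatabase @copyOnlyParams
}
```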

So why would we use this, if all it’s doing is duplicating T-SQL? Mostly because it becomes easier to integrate with PowerShell in this manner, allowing us to use PowerShell for what it’s good at. One pattern I like to advocate is using PowerShell to create backup directories as part of my backup process, then back up the database, then use PowerShell again to remove old backups. Consider this script:

$dbs = dir SQLSERVER:\SQL\localhost\DEFAULT\databases | Where-Object {$_.status -eq 'Normal'}

foreach ($db in $dbs.name){

$backuppath = "C:\DBBackups\$db"

if(!(Test-Path $backuppath )){New-Item -ItemType Directory -Path $backuppath}

$backupfile = Join-Path -Path $backuppath -ChildPath "$db-$(Get-Date -Format 'yyyyMMddHHmm').bak"

Backup-SqlDatabase -ServerInstance LocalHost -Database $db -BackupFile $backupfile -Initialize

dir $backuppath | Where-Object {$_.FullName -ne $backupfile} | Remove-Item

}

This will dynamically back up all the user databases on the local instance, each with a unique name in its own directory. The script also uses PowerShell to handle the file system tasks of creating the folder if it does not exist and cleaning up any backups other than the one we just created. We have a simple, dynamic backup script, all with a fairly minimal amount of coding.
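The cleanup step above removes everything except the newest backup. If you would rather keep the last few, one possible variation is sketched below. The function name Remove-OldBackup and its -Keep parameter are hypothetical, and the demo runs against throwaway files in a temp folder rather than real backups. It assumes PowerShell 3.0 or later.

```powershell
# Hypothetical helper: keep the newest $Keep .bak files in a folder, remove the rest.
function Remove-OldBackup {
    [CmdletBinding(SupportsShouldProcess)]
    param(
        [Parameter(Mandatory)][string]$Path,
        [int]$Keep = 3
    )
    Get-ChildItem -Path $Path -Filter '*.bak' -File |
        Sort-Object -Property LastWriteTime -Descending |   # newest first
        Select-Object -Skip $Keep |                         # everything past the newest $Keep
        Remove-Item
}

# Demo: five empty files, each one an hour older than the last.
$demo = Join-Path ([System.IO.Path]::GetTempPath()) "backup-demo-$([guid]::NewGuid())"
New-Item -ItemType Directory -Path $demo | Out-Null
1..5 | ForEach-Object {
    $file = New-Item -ItemType File -Path (Join-Path $demo "db-$_.bak")
    $file.LastWriteTime = (Get-Date).AddHours(-$_)
}

Remove-OldBackup -Path $demo -Keep 2
$remaining = Get-ChildItem -Path $demo | Sort-Object Name | Select-Object -ExpandProperty Name
$remaining                       # db-1.bak and db-2.bak, the two newest files

Remove-Item -Path $demo -Recurse # clean up the demo folder
```

Because the function declares SupportsShouldProcess, calling it with -WhatIf previews the deletions without removing anything, which is a sensible first run against a real backup folder.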

The counterpart to this is Restore-SqlDatabase. After all, our backups are not all that useful if we can’t restore them. The cmdlet’s arguments are very similar to those of Backup-SqlDatabase, and most of them are direct translations of the T-SQL RESTORE command options. One gotcha to call out is how relocating database files is handled.

Moving database files with a restore is a pretty common operation. The way the cmdlet handles it makes sense if you remember that everything is working through the SMO. What we need for moving files is a collection of RelocateFile objects, which the cmdlet will then use to establish the restore:

$MoveFiles = @()

$MoveFiles += New-Object Microsoft.SqlServer.Management.Smo.RelocateFile ('AdventureWorks2012_Data','C:\DBFiles\data\AdventureWorks2012New_Data.mdf')

$MoveFiles += New-Object Microsoft.SqlServer.Management.Smo.RelocateFile ('AdventureWorks2012_Log','C:\DBFiles\log\AdventureWorks2012New_Log.ldf')

Restore-SqlDatabase -ServerInstance PICARD -Database AdventureWorks2012New -RelocateFile $MoveFiles -BackupFile 'C:\DBBackups\AdventureWorks2012\AdventureWorks2012-201510101251.bak'

As with our Backup-SqlDatabase cmdlet, we can see the T-SQL that would execute this by using the -Script argument.
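The logical file names given to each RelocateFile object have to match the names stored in the backup. When you don't know them offhand, one option is to read them with RESTORE FILELISTONLY through Invoke-Sqlcmd and build the collection from the result. The sketch below is an assumption-laden illustration: Get-RelocatedPath is a hypothetical helper, the target folders are the ones used in the example above, and the file naming scheme is just one way to keep the new files unique.

```powershell
# Hypothetical helper: map one RESTORE FILELISTONLY row to a new physical path.
function Get-RelocatedPath {
    param(
        [Parameter(Mandatory)]$FileRow,                 # needs LogicalName, PhysicalName, Type
        [Parameter(Mandatory)][string]$NewDatabaseName
    )
    # Type is 'L' for log files; everything else is treated as a data file here.
    $folder    = if ($FileRow.Type -eq 'L') { 'C:\DBFiles\log' } else { 'C:\DBFiles\data' }
    $extension = [System.IO.Path]::GetExtension($FileRow.PhysicalName)
    "$folder\$($NewDatabaseName)_$($FileRow.LogicalName)$extension"
}

# Guard (an illustrative addition): only query the server where Invoke-Sqlcmd is available.
if (Get-Command -Name Invoke-Sqlcmd -ErrorAction SilentlyContinue) {
    $backupFile = 'C:\DBBackups\AdventureWorks2012\AdventureWorks2012-201510101251.bak'
    $rows = Invoke-Sqlcmd -ServerInstance 'PICARD' -Query "RESTORE FILELISTONLY FROM DISK = N'$backupFile'"

    # One RelocateFile per file recorded in the backup.
    $MoveFiles = @(foreach ($row in $rows) {
        $newPath = Get-RelocatedPath -FileRow $row -NewDatabaseName 'AdventureWorks2012New'
        New-Object Microsoft.SqlServer.Management.Smo.RelocateFile -ArgumentList $row.LogicalName, $newPath
    })
}
```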

Again, this cmdlet is most useful when included in a larger script. I like using it for quick and dirty script generation for point-in-time restores:

$LastFull = Get-ChildItem 'C:\Backups\AdventureWorks2012\*.bak' | Sort-Object LastWriteTime -Descending | Select-Object -First 1

$logs = @(Get-ChildItem 'C:\Backups\AdventureWorks2012\*.trn' | Where-Object {$_.LastWriteTime -gt $LastFull.LastWriteTime} | Sort-Object LastWriteTime)

$MoveFiles = @()

$MoveFiles += New-Object Microsoft.SqlServer.Management.Smo.RelocateFile ('AdventureWorks2012_Data','C:\DBFiles\data\AdventureWorks2012New_Data.mdf')

$MoveFiles += New-Object Microsoft.SqlServer.Management.Smo.RelocateFile ('AdventureWorks2012_Log','C:\DBFiles\log\AdventureWorks2012New_Log.ldf')

$db = 'AdventureWorks2012New'

Restore-SqlDatabase -ServerInstance 'PICARD' -Database $db -RelocateFile $MoveFiles -BackupFile $LastFull.FullName -RestoreAction Database -NoRecovery -Script | Out-File 'C:\Temp\Restore.sql'

foreach($log in $logs){

if($log -eq $logs[-1]){

Restore-SqlDatabase -ServerInstance 'PICARD' -Database $db -BackupFile $log.FullName -RestoreAction Log -Script | Out-File 'C:\Temp\Restore.sql' -Append

}

else{

Restore-SqlDatabase -ServerInstance 'PICARD' -Database $db -BackupFile $log.FullName -RestoreAction Log -NoRecovery -Script | Out-File 'C:\Temp\Restore.sql' -Append

}

}

While it seems like a lot of code, it follows an understandable pattern. The first two Get-ChildItem calls collect our most recent full backup and any log backups taken after it. Next we build our RelocateFile collection. Then we just run the Restore-SqlDatabase cmdlet with the -Script argument for each file, piping the output to a file. The result is a full restore script with all of our transaction log files sorted for us. This kind of script saves me a lot of time when I have to execute database restores, and I can avoid wading through a lot of transaction log backups.
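To see the file selection and ordering on their own, without a SQL Server instance, here is a small sketch that runs the same Get-ChildItem logic against empty placeholder files in a temp folder. The file names and timestamps are made up for the demo, and the plan it builds only labels which restore would use NORECOVERY; it does not call Restore-SqlDatabase.

```powershell
# Demo folder with placeholder files: an old full, a newer full, and three logs.
$demo = Join-Path ([System.IO.Path]::GetTempPath()) "restore-demo-$([guid]::NewGuid())"
New-Item -ItemType Directory -Path $demo | Out-Null

$start = [datetime]'2015-10-10T12:00:00'
$files = [ordered]@{
    'full-old.bak' = 0      # minutes after $start
    'log-1.trn'    = 10
    'full-new.bak' = 20
    'log-2.trn'    = 30
    'log-3.trn'    = 40
}
foreach ($name in $files.Keys) {
    $item = New-Item -ItemType File -Path (Join-Path $demo $name)
    $item.LastWriteTime = $start.AddMinutes($files[$name])
}

# Most recent full backup, then only the logs written after it, oldest first.
$lastFull = Get-ChildItem (Join-Path $demo '*.bak') |
    Sort-Object LastWriteTime -Descending | Select-Object -First 1
$logs = @(Get-ChildItem (Join-Path $demo '*.trn') |
    Where-Object { $_.LastWriteTime -gt $lastFull.LastWriteTime } |
    Sort-Object LastWriteTime)

# Every step except the final log restore leaves the database restoring.
$plan = @("$($lastFull.Name) (NORECOVERY)")
foreach ($log in $logs) {
    if ($log.FullName -eq $logs[-1].FullName) { $plan += "$($log.Name) (RECOVERY)" }
    else                                      { $plan += "$($log.Name) (NORECOVERY)" }
}
$plan

Remove-Item -Path $demo -Recurse   # clean up the demo folder
```

The older full backup and the log taken before the newest full are skipped, which is the part that saves the manual sorting.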

For my money, the Backup- and Restore-SqlDatabase cmdlets are two of the more useful functions from the module. They make it easy to combine my SQL Server work with the file system tasks that need to happen as well. They also reduce the amount of code I need to write within PowerShell, reducing the overall complexity of the work. While much of this could be done using straight T-SQL, leveraging the right parts of PowerShell makes for robust and reliable processes.

Backup-SqlDatabase creates a progress bar. The progress shows successfully but when it completes, if it’s part of a bigger script and there’s more output to the console or just simply it’s running through the rest of the script, the progress bar stays at 100% complete and never goes away. There is a way to not show the progress bar at all by using $ProgressPreference, but the progress bar is important to the user experience and I don’t want to disable that. Do you know of a way to actually close the progress bar after it’s done? I’ve tried multiple variations of a Write-Progress -Completed cmdlet; but none of them seem to work.

eg.

Write-Progress -id 1 -Activity "Backing up (Database: '$Database' ; Server: '$SQLServer' ; Action = 'Database')" -Status "100% complete" -Completed