Previously we went over how Azure handles regular backups of your Azure SQL Databases. It is such a huge weight off an administrator’s shoulders when we only have to worry about restoring a database if something goes wrong. While many DBAs might struggle with letting go of the need to control and manage their backups, I think most of us will embrace the freedom from the tedium and worry of keeping an eye on this part of our disaster recovery.

There still is a need to take a backup of some sort. Over my career, while I have used backups for protecting my data, I have also used them for other tasks. Sometimes it is to snapshot the data at a point in time, such as before a code release or a major change. Other times a backup can serve as a great template for creating a new application database, especially if you have a federated database model. Whatever your use case, there are times we would need to snapshot a database so we can restore from it.

Exports

One approach would be to just mark down the time you want to use as your backup and restore from there, but this approach could be difficult to control and tricky to automate. Azure offers us a better option: exporting the Azure SQL database in question to blob storage. We can restore (or, more precisely, import) this export to a new database.

Running an export is a simple call to the Start-AzureSqlDatabaseExport cmdlet. Just like the restore cmdlet, it will start the process in the Azure environment, running in the background while we do other work. To run an export, we need the following information:

- Azure SQL database to export

- The administrative login for the server hosting your Azure SQL Database (which we will define as a SQL Database server connection context)

- The storage container information

The only mildly frustrating thing with the export is that we need to use cmdlets from both the Azure module and the AzureRM module (assuming your storage blob is deployed using the resource manager model). Because of this, make sure you run Add-AzureAccount and Login-AzureRMAccount before you get started.

We first need to create a connection context for our Azure SQL Database instance, using a credential for our admin login and the server name:

$cred = Get-Credential

$sqlctxt = New-AzureSqlDatabaseServerContext -ServerName msfazuresql -Credential $cred

Once we have established our SQL connection context, we will then need to set our storage context using a combination of AzureRM and Azure cmdlets.

$key = (Get-AzureRmStorageAccountKey -ResourceGroupName Test -StorageAccountName msftest).Key1

$stctxt = New-AzureStorageContext -StorageAccountName msftest -StorageAccountKey $key

Now we can start the export. Notice that we need a name for the export, which goes in the BlobName parameter.

$exp = Start-AzureSqlDatabaseExport -SqlConnectionContext $sqlctxt -StorageContext $stctxt -StorageContainerName sqlexports -DatabaseName awdb -BlobName awdb_export

Since this only starts the export, we need a way to check on the status. We can check using Get-AzureSqlDatabaseImportExportStatus. Oddly enough, the status cmdlet requires the username and password to be passed separately and does not take a credential object.

Get-AzureSqlDatabaseImportExportStatus -RequestId $exp.RequestGuid -ServerName msfazuresql -Username $cred.UserName -Password $cred.GetNetworkCredential().Password

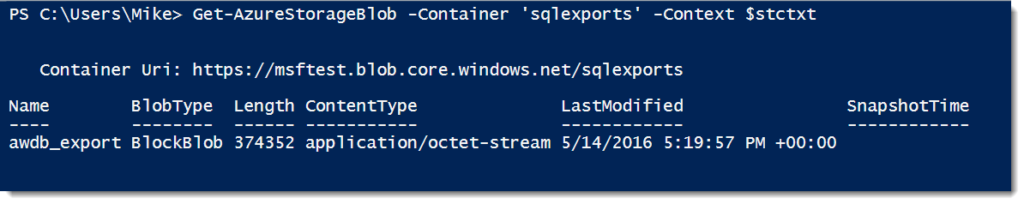
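Since the export runs in the background, a small polling loop can save some retyping. The following is only a sketch: it assumes $exp and $cred from the steps above, that the object returned by the status cmdlet exposes a Status string containing words like "Completed" or "Failed", and that the server name matches the one used for the connection context. The $serverName and $pollSeconds variables are made up for illustration.

```powershell
# Sketch only: poll the export request until it looks finished.
$serverName  = 'msfazuresql'   # assumed: same server as the connection context
$pollSeconds = 30              # hypothetical polling interval

while ($true) {
    try {
        $status = Get-AzureSqlDatabaseImportExportStatus -RequestId $exp.RequestGuid `
            -ServerName $serverName -Username $cred.UserName `
            -Password $cred.GetNetworkCredential().Password -ErrorAction Stop
    }
    catch {
        # A finished request may no longer be queryable, so treat an error
        # as "probably done" and stop polling.
        Write-Warning "Status lookup failed; the export may have completed: $_"
        break
    }

    # Assumption: the returned object has a Status property.
    Write-Host "Export status: $($status.Status)"
    if ($status.Status -match 'Completed|Failed') { break }

    Start-Sleep -Seconds $pollSeconds
}
```

Once the loop exits, listing the container (for example with Get-AzureStorageBlob -Container sqlexports -Context $stctxt) is a reasonable way to confirm the blob actually landed.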

And then there is our blob.

There are two gotchas to keep in mind with both the export and the status. The first is that you cannot overwrite an existing blob, so make sure your blob name is unique (or get rid of the old one). The second is that you cannot check on the status of an export that has finished. If you get an error, chances are your export has completed.
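One way to sidestep the first gotcha is to stamp each export with the current time so the blob name never collides. This is a sketch under assumptions: the timestamp format and the .bacpac suffix are my own illustrative choices, and $sqlctxt and $stctxt are the contexts created earlier.

```powershell
# Sketch only: build a unique blob name from the current time.
# The name pattern is an illustrative choice, not a requirement.
$blobName = 'awdb_export_{0:yyyyMMdd_HHmmss}.bacpac' -f (Get-Date)

$exp = Start-AzureSqlDatabaseExport -SqlConnectionContext $sqlctxt `
    -StorageContext $stctxt -StorageContainerName sqlexports `
    -DatabaseName awdb -BlobName $blobName

# Alternative: delete the old blob first and reuse the same name.
# Remove-AzureStorageBlob -Container sqlexports -Blob awdb_export -Context $stctxt
```

Timestamped names also leave you with a history of exports to pick from, at the cost of having to clean up old blobs yourself.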

Imports

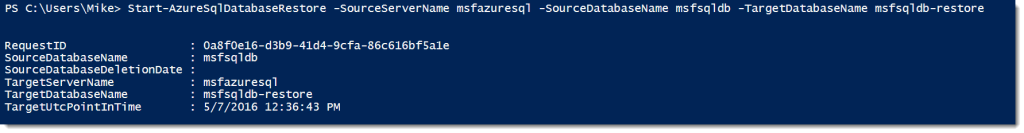

Once we have our export, we now have a “backup file” we can create new databases from. All we need to do is run an import of our blob. Just as with our export, we need some information for our import, which we will (unsurprisingly) run with Start-AzureSqlDatabaseImport:

- The storage container and blob that we will import from

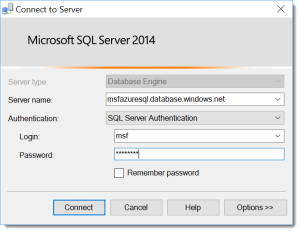

- A destination server and credentials for the server

- The name for our database

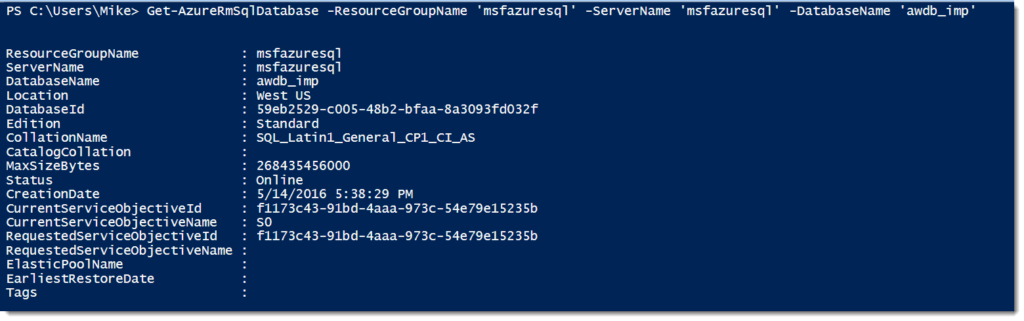

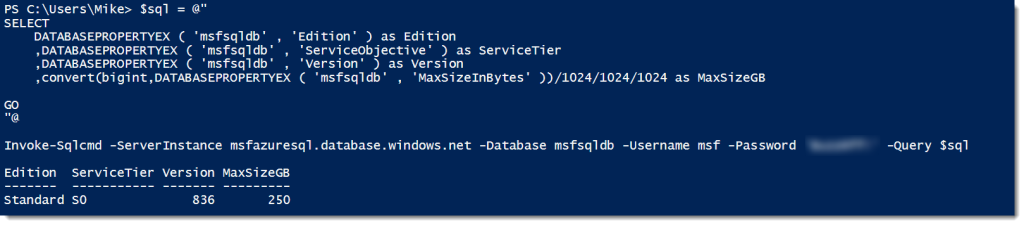

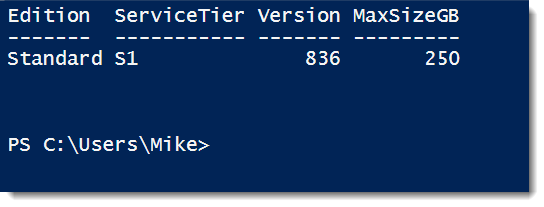

Now, since we are creating a new Azure SQL Database with the import, the process needs to define a service objective. By default, it will import the database at Standard 0 (S0), but you can define a higher or lower edition if you want. To simplify things, we will go with the defaults and use the contexts from above, so all we really need to do to kick off the import is:

Start-AzureSqlDatabaseImport -SqlConnectionContext $sqlctxt -StorageContext $stctxt -StorageContainerName sqlexports -DatabaseName awdb_imp -BlobName awdb_export

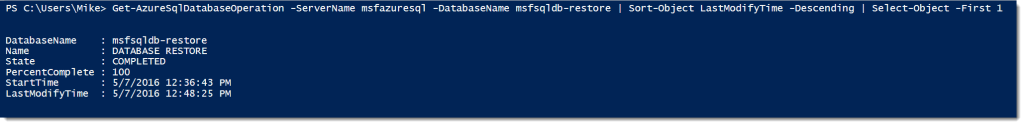

When the import completes, we have a new Azure SQL Database created from our export blob.
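If the default tier is not what you want, the import cmdlet also accepts an edition and a maximum size. The sketch below assumes the -Edition and -DatabaseMaxSize parameters behave as I remember them (edition by name, size in GB), so check Get-Help Start-AzureSqlDatabaseImport before relying on it. The database name awdb_imp_basic and the chosen values are hypothetical.

```powershell
# Sketch only: import the same export blob into a Basic edition database.
# -Edition and -DatabaseMaxSize are assumed parameters; verify with Get-Help.
$imp = Start-AzureSqlDatabaseImport -SqlConnectionContext $sqlctxt `
    -StorageContext $stctxt -StorageContainerName sqlexports `
    -DatabaseName awdb_imp_basic -BlobName awdb_export `
    -Edition Basic -DatabaseMaxSize 2

# The returned request can be checked the same way as an export request.
$imp.RequestGuid
```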

The Secret Sauce

What makes this black voodoo magic work? Is this some proprietary technique Microsoft has snuck in on us? Surprisingly, this is a bit of technology that has existed for some time now as part of SQL Server Data Tools: the BACPAC. A BACPAC is essentially a logical backup of a database, a package that stores the schema as a model definition and the table data as bulk-exported files.

This differs from a typical SQL Server backup, which stores your database pages directly in a binary format. Because of this, native backups are smaller and can be made/restored faster. However, they are more rigid, as you can only restore a native backup in specific scenarios. A logical backup, since it describes the schema and data independently of how they are physically stored, can be more flexible.

I don’t know why Microsoft went with BACPACs over some native format, but because they did, we can also migrate a database from on-premises SQL Server to Azure SQL Database. This is a follow-up to a common question I get: “How can I copy my database up to Azure SQL Database?” I want to look at this in my next post. Tune in next week, where we will create a BACPAC from a regular SQL Server database and migrate it up to Azure SQL Database.
