A couple weeks ago I came across an interesting flaw in SQL Server partitioning which was causing one of my tables to balloon in size. Essentially, we found that this large table in one of our databases had a lot of empty pages in it, pages that usually got cleaned up by the SQL Server engine. Unfortunately these pages were not getting removed and this 2 billion row table was about triple the size it should have been.

I won’t focus on the table to much, but there were two elements that created this problem: the table was a heap and the partition key was volatile (got updated frequently). I’m not yet sure if it’s a case of forward pointers or what other mechanic within SQL Server is behind this, but the result is that when we start moving data in a heap across partitions by changing the partition key, pages the data occupied were left behind and empty in the old partition.

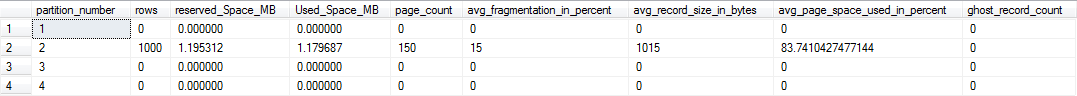

To test it, I built a basic heap table partitioned on an integer, then stuffed it with 1000 rows. I then queried some of the DMVs to see what the layout of the data was:

Partition 2 has 1000 rows in it, occupying 150 pages, exactly as we would expect. Average page use is ~83.74%, so fairly full. All in all, not unsurprising. What happens, though, if we change the partition key value on those 1000 rows and move it to partition 3?

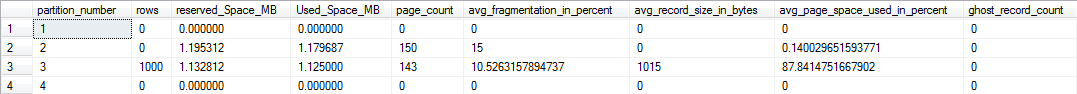

The 150 pages that the data used in partition 2 are still there! And we now also are using 143 pages in partition 3! The result of all this is that even though we’re not duplicating data, we’re duplicating space. Normally, SQL Server will detect these empty pages and remove them, but in this case they are not identified as empty.

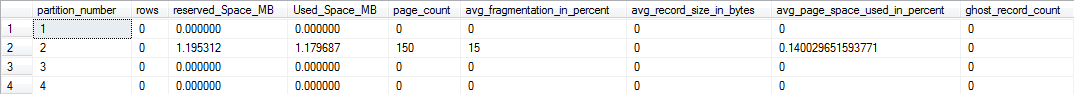

What would happen if, instead of updating our data, what happens if we simply delete the rows?

Even though we removed all our data from partition 2, those pages are still out there occupying space(note the avg_page_space_used_in_percent field). Obviously, this can quickly spiral out of control and become a major issue.

I did go through all these tests with a clustered index on the table and the results were much more positive. When I moved data from one partition to the next or deleted rows, the pages the data occupied were removed as expected, keeping the total size of the table down.

There are a couple lessons learned from this:

- Heaps are trouble! Based on the evidence we have here, it’s fairly evident that using a heap table causes all sorts of problems with partitioning. There are reasons the best practice of using clustered indexes in SQL server exists, this being one of them.

- Do not use a volatile partition key! Keep in mind that when we partition, we’re managing the physical structure under our table. In general, we want to avoid moving data around on our disk. If we choose a partition key that changes a lot, we’re creating a situation where our data moves around unnecessarily, which can cause us other problems even with a clustered index in place.

- Design is vital! The problems we had were created initially many years ago. At the time, things looked fine and there weren’t any noticeable issues. It wasn’t until much later, when the table had grown to several billion rows, that we had problems. You need to take care in designing out your structures, because fixing very large tables is weeks(if not months) of coordinated work and can require significant downtime to implement.

So there it is. I’m not sure if this is intended behavior or just an oversight. Most of the folks I talked to regarding this had not encountered this before, which is not unsurprising. The original table design violates several best practices. However, I do want to make people aware of this and I plan on filing a Connect item regarding this once I’ve had a chance to bounce it off a few more SQL brains.

For those interested, the scripts to reproduce my testing are:

I’m tweeting!

I’m tweeting!

It’s as if MS hates heaps. But sometimes they’re all you need. So I don’t get it.

I’ve seen this occur when deleting from a heap table (http://support.microsoft.com/kb/913399), but didn’t know that this also occurred when changing a partition key.

Thanks for linking that KB article, Kevin. So this has been around since SQL 2000? It appears to fit the pattern I was seeing.