Managing SQL server logins, especially in the days of availability groups, can be quite the pain in the butt. Usually we’ll make use of scripts like sp_help_revlogin to manage this, copying logins en masse from one instance to another. This works, but it’s pretty brute force and there might be logins you may not want to copy across.

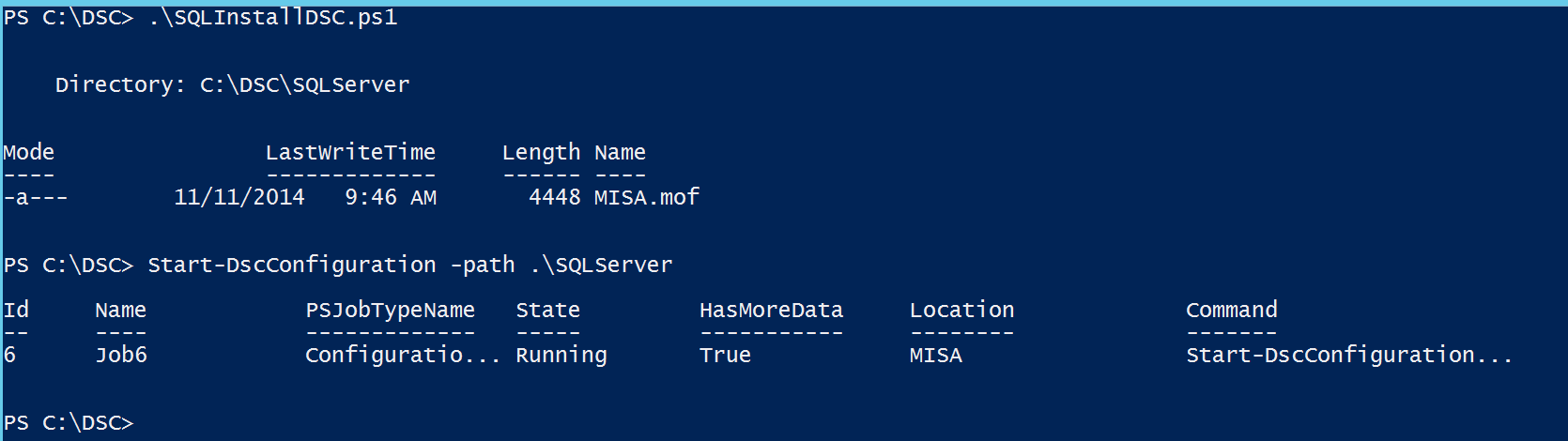

So let’s use Powershell to be a little more…elegant. There’s a couple things we can use to get a nice login copying function that is flexible and robust. The first is the .Script() method in the SMO’s Login object. This is actually a piece of functionality many of us use daily, whenever we script out objects in SQL Server Management Studio. The trick is to access this programmatically, which is a snap in Powershell. The basic syntax is something like this:

[System.Reflection.Assembly]::LoadWithPartialName('Microsoft.SqlServer.SMO') | out-null

$smoserver = New-Object -TypeName Microsoft.SqlServer.Management.Smo.Server localhost

$smoserver.Logins[‘SHION\mike’].Script()

What’s awesome about this method is the SMO will also script out all of the additional server level options, like default database and server roles. Very handy for maintaining the consistency of the logins from one instance to another.

There are a couple of gotchas. First , if we want to include the SID in our script, we have to create a ScriptingOptions object and specify that the LoginSid should be included. Second, if the login is a SQL Server login, the .Script() method will add in a line to disable the login and give it a completely random password. This is done for security reasons, so that any login scripted out can’t create a possible security hole. As an FYI, you’ll get the same if you script out the login in SSMS.

So what do we do? Managing passwords across instances is tricky, but must be done, otherwise things will start to break. With Windows based logins it’s simple, since that’s all managed in the Active Directory. SQL logins require more care. To handle this in T-SQL, you’ll want to create the login with the SQL Server password hash. Unfortunately, the SMO doesn’t provide an easy way to do this, so we have to get clever. To be clever, we have to:

- Retrieve the binary hash value from the source SQL instance.

- Convert the binary hash to a string for use in a CREATE LOGIN statement.

- Insert the password hash string into our CREATE LOGIN statement in the correct place.

Easy enough, right? Honestly, all it takes is some string manipulation, which Powershell is pretty darn good at. Certainly better than SQL Server itself. Turns out this is the biggest hurdle to the process and, once solved, the rest of the script comes together quite nicely.

The following function brings all these concepts together. It’s a little more complex than other scripts I’ve posted, but here’s the highlights:

- You must declare a source instance. This is the instance from which all logins will be scripted from.

- You can specify a string array/collection of logins. The function will only script out the logins you list. If you don’t list any (argument is null), the function will script out all non-system logins (everything but sa, pretty much).

- Because I like the pattern of scripts to build scripts, the function will create a .sql script of all your create login statements. By default this will go to your My Documents folder, but you can specify another directory if you want.

- If you provide an instance to the ApplyTo parameter, the function will attempt to create the logins on that instance if they don’t exist. Pass the -Verbose switch if you want to see which ones it creates and which ones it skips.

function Copy-SQLLogins{

[cmdletbinding()]

param([parameter(Mandatory=$true)][string] $source

,[string] $ApplyTo

,[string[]] $logins

,[string] $outputpath=([Environment]::GetFolderPath("MyDocuments")))

#Load assemblies

[System.Reflection.Assembly]::LoadWithPartialName('Microsoft.SqlServer.SMO') | out-null

#create initial SMO object

$smosource = new-object ('Microsoft.SqlServer.Management.Smo.Server') $source

#Make sure we script out the SID

$so = new-object microsoft.sqlserver.management.smo.scriptingoptions

$so.LoginSid = $true

#set output filename

$filename = $source.Replace('/','_') + '_' + (Get-Date -Format 'yyyyMMddHHmm') + '_logins.sql'

$outfile = Join-Path -Path $outputpath -ChildPath $filename

#If no logins explicitly declared, assume all non-system logins

if(!($logins)){

$logins = ($smosource.Logins | Where-Object {$_.IsSystemObject -eq $false}).Name.Trim()

}

foreach($loginname in $logins){

#get login object

$login = $smosource.Logins[$loginname]

#Script out the login, remove the "DISABLE" statement included by the .Script() method

$lscript = $login.Script($so) | Where {$_ -notlike 'ALTER LOGIN*DISABLE'}

$lscript = $lscript -join ' '

#If SQL Login, sort password, insert into script

if($login.LoginType -eq 'SqlLogin'){

$sql = "SELECT convert(varbinary(256),password_hash) as hashedpass FROM sys.sql_logins where name='"+$loginname+"'"

$hashedpass = ($smosource.databases['tempdb'].ExecuteWithResults($sql)).Tables.hashedpass

$passtring = ConvertTo-SQLHashString $hashedpass

$rndpw = $lscript.Substring($lscript.IndexOf('PASSWORD'),$lscript.IndexOf(', SID')-$lscript.IndexOf('PASSWORD'))

$comment = $lscript.Substring($lscript.IndexOf('/*'),$lscript.IndexOf('*/')-$lscript.IndexOf('/*')+2)

$lscript = $lscript.Replace($comment,'')

$lscript = $lscript.Replace($rndpw,"PASSWORD = $passtring HASHED")

}

#script login to out file

$lscript | Out-File -Append -FilePath $outfile

#if ApplyTo is specified, execute the login creation on the ApplyTo instance

If($ApplyTo){

$smotarget = new-object ('Microsoft.SqlServer.Management.Smo.Server') $ApplyTo

if(!($smotarget.logins.name -contains $loginname)){

$smotarget.Databases['tempdb'].ExecuteNonQuery($lscript)

$outmsg='Login ' + $login.name + ' created.'

}

else{

$outmsg='Login ' + $login.name + ' skipped, already exists on target.'

}

Write-Verbose $outmsg

}

}

}

There is one additional piece to this, and that’s a helper function for converting the binary hash value to a string. It’s pretty simple, taking the value passed to it and doing some formatting magic to get a value for the SQL statement.

function ConvertTo-SQLHashString{

param([parameter(Mandatory=$true)] $binhash)

$outstring = '0x'

$binhash | ForEach-Object {$outstring += ('{0:X}' -f $_).PadLeft(2, '0')}

return $outstring

}

Bring this all together and now we have a straightforward function we can call to help us manage our logins. Some examples:

#Create a SQL Script in My Documents for all logins

Copy-SQLLogins -source 'localhost'

#Create a SQL Script in My documents for the passed logins

Copy-SQLLogins -source 'localhost' -logins @('SHION\mike','test','pcuser')

#Create a SQL Script in My documents for the passed logins and try and create these logins on the target SHION\ALBEDO

Copy-SQLLogins -source 'localhost' -logins @('SHION\mike','test','pcuser') -ApplyTo 'SHION\ALBEDO' -Verbose

Hopefully this helps you in your day to management of your instances. I’ve used this script for migrations and managing my Availability Group nodes. In my opinion, it’s a heck of a lot cleaner than sp_help_revlogin (a venerable tool in its own right, to be sure). As always, this along with my other scripts, can be found up on my GitHub repository

I’m tweeting!

I’m tweeting!